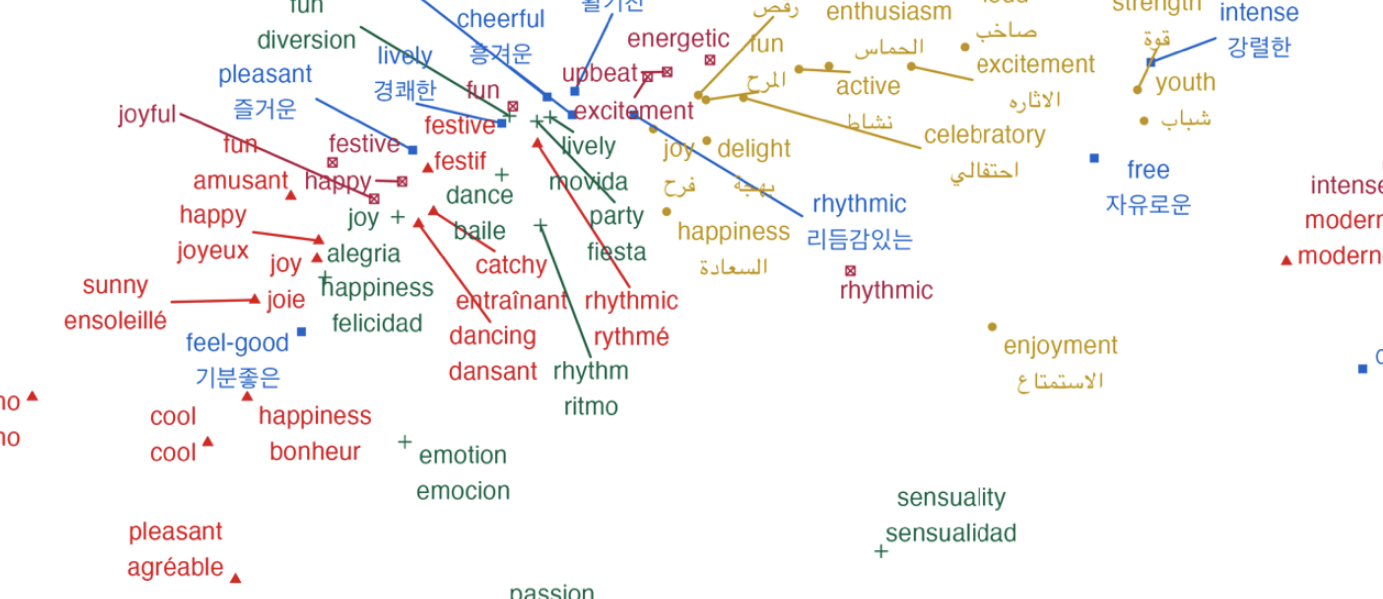

Most of us would agree that an upbeat song in a major key with lyrics about friendship makes us feel happy (e.g. one of the pop songs most frequently rated as inducing “joy” is “Wannabe” by the Spice Girls, according to the EMMA website). This is not surprising: a large body of research in music psychology has established that a fast tempo, major mode, and positive lyrics are associated with positive emotions.

But how do songs make us feel when their constituent elements convey different, or even conflicting, emotions – for instance, a fast, driving drum beat and a bright major melody paired with lyrics about a painful breakup? Do we feel a linear sum of these emotions, or do combinations of emotional cues evoke complex emotions beyond this sum? And how do individual differences such as personality, musical genre preferences, and cultural background shape these responses?

Few studies have examined how incongruent emotional cues across a song's components combine to shape the overall emotion that listeners perceive or feel. This is presumably due to technological limitations in manipulating audio files (e.g. cleanly separating songs into stems, or obscuring lyrics) and the high cost of recruiting participants to annotate the many stimuli that result from such manipulations.

Although many studies consider both lyrical and acoustic features when investigating song emotion, most of this work relies on computational Music Emotion Recognition (MER) models that prioritize predictive performance over psychological interpretability.

This project aims to bridge this gap by:

- Conducting large-scale empirical investigations that compare the emotions listeners report for isolated stems with those they report for full songs.

- Comparing how MER models and humans process emotions from different song cues.

- Investigating how cultural background, listening habits, and personality influence song-emotion perception.